I’ve blogged several times about Domino views, their structure, and their processing patterns. For the current V12.02 effort, we were tasked with researching the performance of incremental view updating once again. The earlier blog post about the inline feature still applies; it is a proven and sound method for shrinking the cost of refreshing views. But it has two drawbacks:

- It slows document updates somewhat.

- It forces knowledge of which views are most contentious onto administrators and application developers.

We were in search of a more global approach, one that compromised nothing and cost no extra work.

Many suggestions have been made to replace the indexing engine outright. Indeed, this was done as part of the ill-fated NSFDB2 project in the early 2000s, with varied success. The main obstacle is that, although the internal BTree index API is somewhat generic, specialized operations such as using nested ordinal values to find index entries are not widely supported. And of course, a project to gut and replace such a central facility is large and risky. So we had to commit to lesser ambitions for now.

Our study focused on the multi-thread log jam that occurs when too many threads (think users PLUS utilities like the update task) simultaneously try to “refresh” views (where, as always, “refresh” is a euphemism for “update”). Referencing the diagram in the header above, the way it works is this:

- Thread T4 attempts to refresh the view, which requires locking the view exclusively.

- Thread T1 is already updating the view and so holds the lock.

- Threads T2 and T3 are in the queue to search the view and read some entries, respectively.

Thread T4 must wait until T1 completes its updates and threads T2 and T3 complete their read operations. Since T2 and T3 are only reading data in the view, they obtain shared locks and run simultaneously, whereas T1 and T4 must each be the only thread updating the view at a time. The honoring of a thread’s order in the queue, whether the thread is reading or updating, is called “fair read/write” internally, and it’s orderly and, well, fair. But if T1’s updating or T4’s updating lags, then the queue gets long and humans waiting for their views to refresh go for coffee. And, of course, it’s not a problematic queue until there are at least 25 threads in the game, and I’ve seen over 100 at times. So, very obviously, shrinking the work that updating threads do increases throughput and postpones coffee breaks. Could we do that?
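To make the queue behavior concrete, here is a minimal Python sketch of a fair read/write schedule. It is only a model of the ordering described above, not Domino’s internal locking code; the function name `fair_schedule` and the durations (in milliseconds) are invented for illustration.

```python
def fair_schedule(requests):
    """Turn a FIFO queue of (name, mode, duration_ms) requests into batches.

    Consecutive readers ("R") share the lock and run together; each
    writer ("W") runs alone. Queue order is never violated.
    Returns a list of (start_time_ms, [names]) batches.
    """
    batches = []
    clock = 0
    i = 0
    while i < len(requests):
        name, mode, duration = requests[i]
        if mode == "W":
            # A writer needs the lock exclusively.
            batches.append((clock, [name]))
            clock += duration
            i += 1
        else:
            # Adjacent readers run concurrently; the batch lasts as long
            # as its slowest reader.
            group = []
            longest = 0
            while i < len(requests) and requests[i][1] == "R":
                group.append(requests[i][0])
                longest = max(longest, requests[i][2])
                i += 1
            batches.append((clock, group))
            clock += longest
    return batches


# Hypothetical durations: T1 is mid-update, T2/T3 read, T4 wants to refresh.
queue = [("T1", "W", 50), ("T2", "R", 5), ("T3", "R", 8), ("T4", "W", 50)]
schedule = fair_schedule(queue)
for start, names in schedule:
    print(start, names)
```

In this toy run, T4 cannot start until T1’s update and the T2/T3 read batch have both finished, so any extra time T1 spends updating is added directly to T4’s wait.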

The (somewhat surprising) answer was “YES!”. Due to a product identity crisis (which frankly still goes on), Domino has been treated exclusively as either a mail or application server. Applying all the updates one can makes a great deal of sense for mail; get that inbox as current as you can. But in the application space, there’s a time aspect that wasn’t being honored.

To determine whether a view needs refreshing, the current modified time of the database is compared to the last view update time. Domino uses times extensively as implicit data governing all kinds of things, which is smart. Once the decision is made, however, the database modified time that drove the decision is discarded, and view updating works from whatever the time happens to be once it is given control in the queue. That is, it can start later than its original timestamp and conclude later still. The gap can be milliseconds or seconds from the original decision point. It’s well and good to get the view updated, but this is excessive, particularly when other threads (representing people at their computers) are waiting to access the data.

So, we asked what the results would be with two changes:

- Always end the search for documents to be added to the view at the database modified time when the thread was put into the queue.

- Before updating the view, check whether the required updating has already been done by another refreshing thread, and leave any newer changes to later threads in the queue.
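The two changes can be sketched in a few lines of Python. This is a toy model under stated assumptions, not the actual Domino implementation: the `View` class, the `refresh` function, and the integer timestamps are all hypothetical stand-ins for the view index, the refresh operation, and database modified times.

```python
from dataclasses import dataclass, field


@dataclass
class View:
    updated_through: int = 0  # latest database time the index covers
    indexed: list = field(default_factory=list)


def refresh(view, doc_times, cutoff):
    """Refresh `view` up to `cutoff`, the database modified time captured
    when the thread joined the queue. Returns how many documents were indexed.
    """
    # Second change: an earlier thread may already have covered this cutoff,
    # in which case there is nothing to do while holding the lock.
    if view.updated_through >= cutoff:
        return 0
    # First change: stop at the cutoff; documents modified after it are
    # left for threads that queued later.
    pending = [t for t in doc_times if view.updated_through < t <= cutoff]
    view.indexed.extend(pending)
    view.updated_through = cutoff
    return len(pending)


view = View()
doc_times = [10, 20, 30]      # modification times of existing documents
cutoff_a = cutoff_b = 30      # threads A and B both queue at database time 30
doc_times += [40, 50]         # more updates arrive while A and B wait

first = refresh(view, doc_times, cutoff_a)   # A indexes up to time 30
second = refresh(view, doc_times, cutoff_b)  # B finds the work already done
third = refresh(view, doc_times, 50)         # a later thread picks up the rest
```

In this model, thread A does a bounded amount of work, thread B does none, and the documents that arrived during the wait are handled by whichever thread queues after them.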

Coming up with a transactional mix that made the famous log jam happen was harder than making the two changes above. We varied the document update frequency from 6 per second to about 20 per second. Domino is no OLTP transactional engine, nor was it designed to be. Once we ran the tests, we found the results compelling.

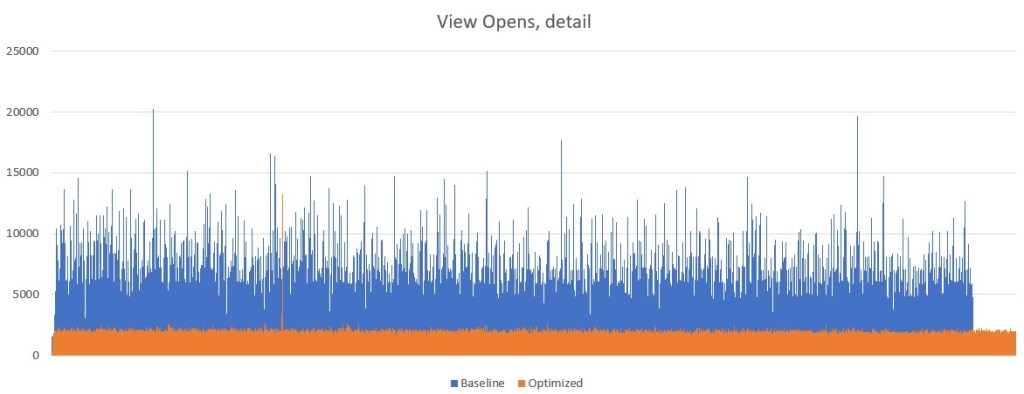

We measured the time it takes to open a view (that is, the time spent updating the view and holding the lock) across the run. Here is the graph of thousands of OPEN_COLLECTION transactions doing view refreshes at 6 document updates/second. The test database contained 200K documents. Timings are in milliseconds (seconds * 1000).

The goal is to eliminate the spikes, meaning the long wait times in the queue. We were willing to give up some milliseconds in average transaction times to get there. A pleasant side effect is more work done in a given time (see the Optimized activity after the baseline ends).

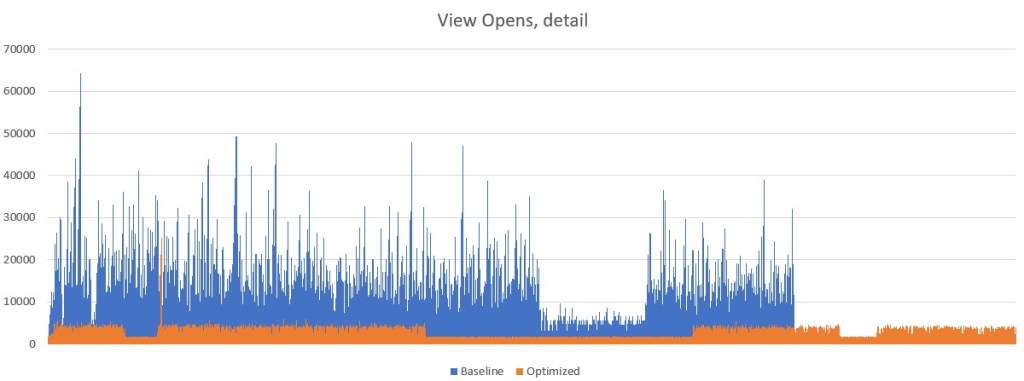

Here is the graph at about 13 document updates/second.

Now, there are places where the optimized load sees a 10-20 second spike, but the improvement is starkly apparent.

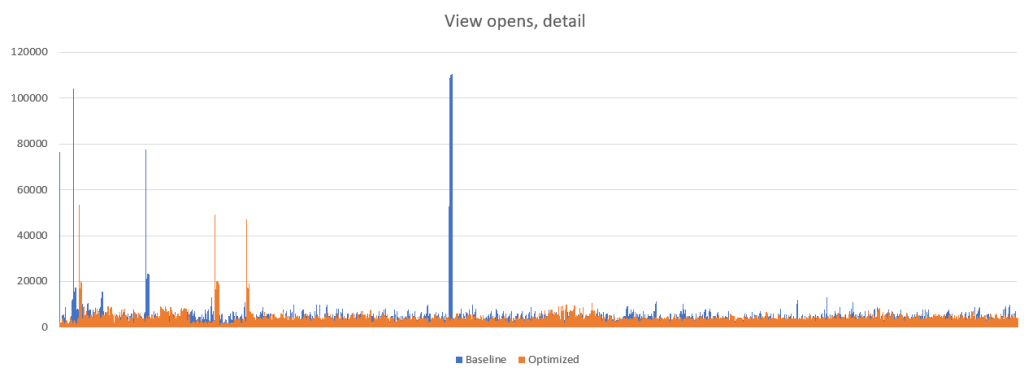

Finally, at an update rate of around 20 documents per second, we had these results.

At this level, the test load created spikes greater than 40 seconds in both baseline and optimized code. The peak baseline spike was actually several minutes, but it has been adjusted for better optics. This rate of streamed updating was the most the test hardware could handle.

There is, of course, much more in play in updating views. The cost of permutation, document size and complexity, and automated streaming updates all cause results to vary, but we believe we have a significant improvement to an old problem, and we’ll let you be the judge of that.

In the v12.02 Early Access program, the optimization is available using

ENABLE_VIEW_MIN_UNTIL=1

in notes.ini. Please feel free and encouraged to try it out!